jmurphy


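This script is designed to prepare calibrated frames for ImageIntegration. It adjusts the brightness scale and the gradient of every frame to match the best frame. This has many benefits; for example:

- More precise data rejection, which makes it easier to reject satellite trails, hot pixels and cosmic ray strikes.

- The final stacked image will only contain the gradient from the best frame.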

The NormalizeScaleGradient script tries to solve the 'ill-posed' issue by using extra data: photometry. The only cost is extra processing time... I have also tried to make it easy to use by calculating 'Auto' default values from the focal length, pixel size and image scale, so it will usually produce a good result with its default values.
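The 'Auto' defaults start from the focal length, pixel size and image scale. The post does not say how the script turns these into its defaults, so the minimal sketch below only shows the standard plate-scale relation those inputs are tied to, assuming pixel size in micrometres and focal length in millimetres. The function names are hypothetical.

```python
# Minimal sketch: the standard plate-scale relation behind "image scale".
# How NormalizeScaleGradient derives its 'Auto' defaults from these values
# is not described in the post; the function names here are hypothetical.

ARCSEC_PER_RADIAN = 206_264.806  # 180 * 3600 / pi


def image_scale_arcsec_per_px(pixel_size_um: float, focal_length_mm: float) -> float:
    """Angular size of one pixel in arcseconds (pixel in µm, focal length in mm)."""
    # Pixel size in mm divided by focal length in mm is the angle in radians.
    return ARCSEC_PER_RADIAN * (pixel_size_um / 1000.0) / focal_length_mm


def arcsec_to_pixels(angle_arcsec: float, scale_arcsec_per_px: float) -> float:
    """Convert an angular size, such as a star's FWHM, to pixels."""
    return angle_arcsec / scale_arcsec_per_px


if __name__ == "__main__":
    scale = image_scale_arcsec_per_px(pixel_size_um=3.76, focal_length_mm=530.0)
    print(f"{scale:.3f} arcsec/px")  # 1.463 arcsec/px
    # A 3 arcsec star profile spans roughly 2 pixels at this scale.
    print(f"{arcsec_to_pixels(3.0, scale):.2f} px")  # 2.05 px
```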

However, this new script is an alternative to LocalNormalization (LN), not a replacement for it. NormalizeScaleGradient assumes that a single scale factor is valid for the whole image. If you have not applied a flat frame, this requirement will not be met, and you should use LN instead. NormalizeScaleGradient is also CPU-intensive, so it is not ideal if you are in a rush. After processing the first few images, it estimates the remaining time. If you have a large number of images from a big sensor, you might have enough time to take the dog for a walk! The good news is that it needs very little memory, no matter how many images are thrown at it.
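To illustrate what "a single scale factor for the whole image" means, the sketch below fits one factor per frame from photometry. It assumes matched, background-subtracted star fluxes from the reference and target frames (local background subtraction keeps an additive gradient from biasing them) and fits ref ≈ s × target by least squares through the origin. This is a generic fit, not necessarily the method NormalizeScaleGradient uses; `fit_scale` is a hypothetical name.

```python
# Hedged sketch: estimate ONE brightness scale factor for a whole frame from
# matched star fluxes. Assumes background-subtracted aperture photometry of
# the same stars in the reference and target frames. Generic least squares,
# not necessarily NormalizeScaleGradient's own method.

from typing import Sequence


def fit_scale(ref_flux: Sequence[float], tgt_flux: Sequence[float]) -> float:
    """Least-squares s for ref ≈ s * tgt (a line through the origin)."""
    if len(ref_flux) != len(tgt_flux) or not ref_flux:
        raise ValueError("need equal-length, non-empty flux lists")
    num = sum(r * t for r, t in zip(ref_flux, tgt_flux))
    den = sum(t * t for t in tgt_flux)
    if den == 0:
        raise ValueError("target fluxes are all zero")
    return num / den


if __name__ == "__main__":
    # Target frame taken through thicker haze: its stars are 20% fainter.
    tgt = [800.0, 1600.0, 4000.0, 12000.0]
    ref = [t * 1.25 for t in tgt]
    print(fit_scale(ref, tgt))  # 1.25
```

With a flat applied, every star in the frame should agree on this one factor; a flux ratio that drifts across the field is a hint that the single-scale assumption does not hold.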

How to use

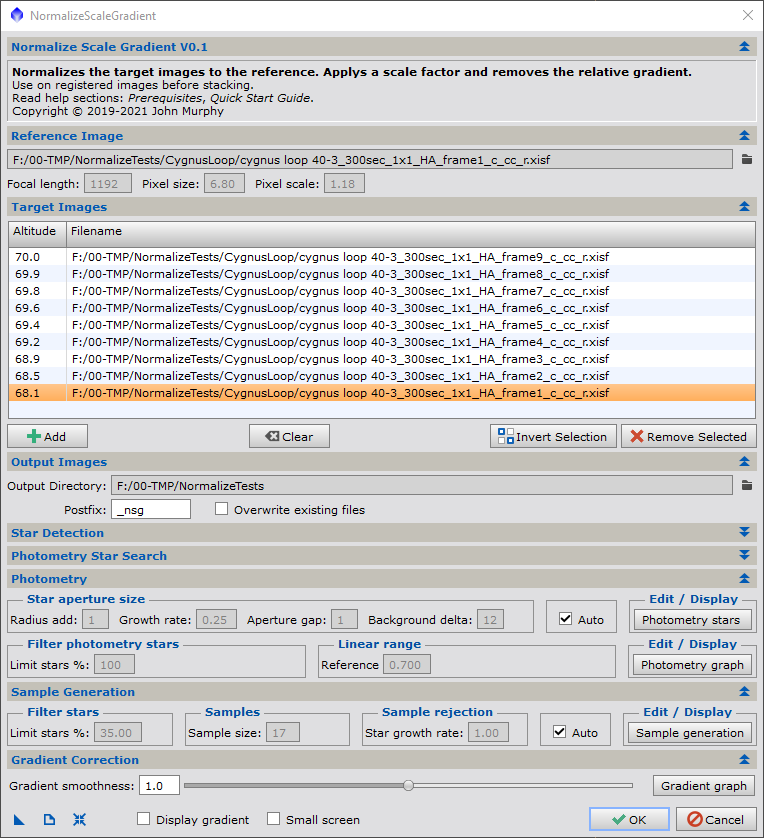

The input frames must be registered to each other. First, load all the files using the 'Add' button. Note the altitude displayed on the left (blank if the altitude FITS header keyword does not exist). Set the reference frame to the frame with the smallest gradient. Select the worst frame (probably the one with the lowest altitude) and display the 'Gradient graph'.
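The altitude column comes from the FITS header, and the lowest-altitude frame is a good first guess for the worst frame. A minimal sketch, assuming raw 80-character header cards and a hypothetical altitude keyword `OBJCTALT` (the post does not say which keyword the script reads). Frames without the keyword are skipped, much as the script leaves their altitude blank.

```python
# Hedged sketch: read a numeric keyword from raw FITS header cards and pick
# the lowest-altitude frame as the likely "worst" frame. OBJCTALT is an
# assumption (capture programs differ); use whatever your files contain.

from typing import Dict, List, Optional

ALT_KEYWORD = "OBJCTALT"  # hypothetical; check your own FITS headers


def read_numeric_card(cards: List[str], keyword: str) -> Optional[float]:
    """Return the numeric value of `keyword`, or None if it is absent."""
    for card in cards:
        card = card.ljust(80)
        # Columns 1-8 hold the keyword, columns 9-10 the value indicator "= ".
        if card[:8].rstrip() == keyword and card[8:10] == "= ":
            value = card[10:].split("/", 1)[0].strip()  # drop any comment
            try:
                return float(value)
            except ValueError:
                return None
    return None


def lowest_altitude_frame(headers: Dict[str, List[str]]) -> Optional[str]:
    """Name of the frame with the smallest altitude, ignoring frames without one."""
    altitudes: Dict[str, float] = {}
    for name, cards in headers.items():
        alt = read_numeric_card(cards, ALT_KEYWORD)
        if alt is not None:
            altitudes[name] = alt
    if not altitudes:
        return None
    return min(altitudes, key=altitudes.get)


if __name__ == "__main__":
    headers = {
        "light_001.fits": ["OBJCTALT=                 62.4 / altitude, deg"],
        "light_002.fits": ["OBJCTALT=                 31.7 / altitude, deg"],
        "light_003.fits": ["EXPTIME =                300.0"],
    }
    print(lowest_altitude_frame(headers))  # light_002.fits
```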

The 'Gradient graph' displays the horizontal component of the gradient. Since light pollution is usually a smooth variation, this curve should also be smooth.
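The idea behind a horizontal gradient profile can be sketched in one dimension. This is a much simplified illustration, not the script's algorithm: it assumes the scale factor `s` is already known, takes the column-wise median of `ref - s * tgt` as the horizontal profile, and uses a straight-line fit as a stand-in for whatever smooth model the script really uses. The fitted gradient is then added to the scaled target so it matches the reference. All names are hypothetical.

```python
# Simplified 1-D illustration (not the script's algorithm) of a horizontal
# gradient profile. Assumptions: ref and tgt are registered 2-D lists of the
# same shape, the scale factor s is already known, and a straight line is an
# adequate smooth model for this example.

from statistics import median
from typing import List

Image = List[List[float]]  # image[row][column]


def horizontal_profile(ref: Image, tgt: Image, s: float) -> List[float]:
    """Median of (ref - s * tgt) down each column; medians resist star residuals."""
    n_cols = len(ref[0])
    return [median(r[c] - s * t[c] for r, t in zip(ref, tgt)) for c in range(n_cols)]


def fit_line(profile: List[float]) -> List[float]:
    """Least-squares straight line through the profile, evaluated at each column."""
    n = len(profile)
    xs = range(n)
    mx = (n - 1) / 2
    my = sum(profile) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        return [my] * n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, profile)) / sxx
    return [my + slope * (x - mx) for x in xs]


def normalize(tgt: Image, s: float, gradient: List[float]) -> Image:
    """Scale the target and add the smooth gradient so it matches the reference."""
    return [[s * v + gradient[c] for c, v in enumerate(row)] for row in tgt]


if __name__ == "__main__":
    s = 1.25
    tgt = [[80.0] * 5 for _ in range(3)]
    ref = [[100.0 + 0.5 * c for c in range(5)] for _ in range(3)]
    ref[1][2] += 500.0  # a star residual in one row only
    profile = horizontal_profile(ref, tgt, s)
    print(profile)  # [0.0, 0.5, 1.0, 1.5, 2.0]
    print(normalize(tgt, s, fit_line(profile))[0])  # [100.0, 100.5, 101.0, 101.5, 102.0]
```

A jagged profile here would mean the difference image is dominated by something other than a smooth light-pollution gradient, which is why the real graph is expected to be smooth.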

Select 'OK' and go and get a well-deserved drink!

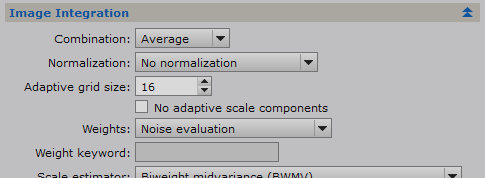

To stack the images, you need to set a few parameters in ImageIntegration, because your images have already been normalized and we don't want this work to be undone. I think the following settings are correct, but please let me know if they are not:

'Normalization' should be set to 'No normalization'. We have already done that job!

However, the 'Weights' method needs to be selected. 'Noise evaluation' is a good option. An alternative is to use the Weight keyword 'WEIGHT' (added to the header by NormalizeScaleGradient).
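With normalization switched off, combining the frames reduces to a weighted average of values that already share one scale and one gradient; re-applying a scale or offset would undo that work. The sketch below shows only that weighted mean, assuming the per-frame weights have been read from the WEIGHT keyword. ImageIntegration's real combination also involves rejection and other options, and `weighted_mean_stack` is a hypothetical name.

```python
# Hedged sketch: a pixel-by-pixel weighted mean of frames that are already
# normalized, using one weight per frame (e.g. WEIGHT keyword values).
# No scale or offset is applied, mirroring 'No normalization'.

from typing import List, Sequence


def weighted_mean_stack(frames: Sequence[Sequence[float]],
                        weights: Sequence[float]) -> List[float]:
    """Weighted mean of flattened, already-normalized frames."""
    if len(frames) != len(weights) or not frames:
        raise ValueError("need one weight per frame")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    n_pixels = len(frames[0])
    return [
        sum(w * f[i] for f, w in zip(frames, weights)) / total
        for i in range(n_pixels)
    ]


if __name__ == "__main__":
    frames = [[100.0, 102.0], [104.0, 106.0]]  # two normalized 2-pixel frames
    weights = [3.0, 1.0]                       # e.g. values read from WEIGHT
    print(weighted_mean_stack(frames, weights))  # [101.0, 103.0]
```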

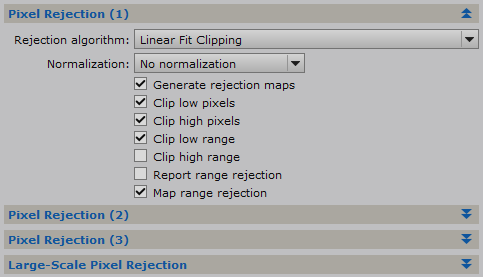

We also need to set 'Pixel Rejection (1)' > 'Normalization' to 'No normalization'.
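Because every frame now shares the reference frame's scale and gradient, an outlier such as a satellite trail stands out against the same baseline in each frame. The sketch below is a generic median/MAD sigma clip of one pixel's values across the stack, with illustrative thresholds; it is not how ImageIntegration implements its rejection algorithms, and `sigma_clip` is a hypothetical name.

```python
# Generic robust sigma clip of one pixel's values across the stack, shown to
# illustrate rejection on frames that already share one scale and gradient.
# This is not ImageIntegration's implementation, and the thresholds are
# illustrative assumptions.

from statistics import median
from typing import List, Sequence

MAD_TO_SIGMA = 1.4826  # converts a median absolute deviation to a Gaussian sigma


def sigma_clip(values: Sequence[float], k_low: float = 4.0, k_high: float = 3.0,
               max_iter: int = 10) -> List[bool]:
    """Return a keep-mask for one pixel stack: False marks a rejected value."""
    keep = [True] * len(values)
    for _ in range(max_iter):
        kept = [v for v, k in zip(values, keep) if k]
        if len(kept) < 3:
            break
        centre = median(kept)
        # Robust spread estimate, so a single outlier barely affects it.
        sigma = MAD_TO_SIGMA * median(abs(v - centre) for v in kept)
        if sigma == 0:
            break
        lo, hi = centre - k_low * sigma, centre + k_high * sigma
        new_keep = [k and lo <= v <= hi for v, k in zip(values, keep)]
        if new_keep == keep:
            break
        keep = new_keep
    return keep


if __name__ == "__main__":
    # Six normalized frames; the last one has a satellite trail on this pixel.
    stack = [100.1, 99.8, 100.3, 100.0, 99.9, 180.0]
    print(sigma_clip(stack))  # [True, True, True, True, True, False]
```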

Install

Unzip the script to a folder of your choice.

In the PixInsight SCRIPTS menu, select 'Feature Scripts...'

Select 'Add' and navigate to the folder.

Select 'Done'.

The script will now appear in 'SCRIPTS > Batch Processing > NormalizeScaleGradient'.

See message #10 for more details on this script.

See message #47 for the script.

Regards, John Murphy


NormalizeScaleGradient: Bookmark website now! (pixinsight.com)
