1 Introduction

Released 2023 November 12

In this document we will describe the Multiscale All-Sky Reference Survey (MARS), a new project for the correction of additive gradients in astronomical deep-sky images based on observational data, which will be the basis of the next generation of gradient correction algorithms and tools in PixInsight. The current gradient correction techniques face three problems:

- First, gradient correction is currently a plain guess. We define a gradient model by identifying areas of the image that are presumed to be free of extended objects. But these techniques are entirely subjective, even when (in the worst case) they are driven by deep-learning algorithms. In most cases, it is impossible to disentangle gradients from the diffuse structures of an extended nebula, which turns gradient correction into a highly subjective task.

- Second, while it is now possible to model multiplicative gradients accurately using Gaia photometric and spectral data as a reference, we have no robust reference for additive gradients. Because of the first problem described above, the effective correction of this kind of gradient is currently impossible except in very straightforward cases.

- Third, image processing techniques that need a reference image lack a proper, solid referencing system. A good example is mosaic assembly. Nowadays, a single tile acts as the initial reference from which the entire mosaic is built. Following this path, a mosaic cannot be constructed reliably, since each newly added tile must be adapted to the existing partial mosaic, which has its own gradients. Moreover, this assembly technique cannot reconstruct the object structures globally in the mosaic, since we are adapting the mosaic tile by tile. This problem can only be solved if we have a gradient-free reference at the scale of the entire mosaic, allowing us to assemble the mosaic in one go.

In the PixInsight Development Team, we know of only one reliable technique that allows us to correct additive gradients objectively. PTeam member Vicent Peris developed and described this technique in his Multiscale Gradient Correction article. We now want to generalize it by converting the entire sky into a multiscale reference system. This reference will be used by the first objective techniques to correct additive gradients. It will be based on accurate observational data, making current techniques obsolete or, in the best case, simply accessory.

2 Multiscale Gradient Correction

The multiscale gradient correction technique is based on observational data rather than a software-based solution. The method is founded on a simple idea: provided that the gradients are large-scale structures, they become insignificant at a local level in the image.
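The idea that a large-scale gradient becomes nearly constant at a local level can be illustrated numerically. The following is a minimal sketch with a synthetic gradient. The gradient shape, its amplitude, and the cutout size (chosen to mimic the ratio between a 400 mm and a 3400 mm focal length) are assumptions made for illustration only, not measured data.

```python
import numpy as np

# Synthetic additive gradient: a smooth tilt plus a gentle curvature
# across a 2048 x 2048 pixel "wide-field" frame (arbitrary units).
# The coefficients are illustrative assumptions.
size = 2048
y, x = np.mgrid[0:size, 0:size] / (size - 1)   # coordinates in [0, 1]
gradient = 0.10 * x + 0.05 * y + 0.03 * (x - 0.5) ** 2


def peak_to_peak(a):
    """Total variation (max minus min) of an array."""
    return float(a.max() - a.min())


# Cutout covering the fraction of the frame width that a 3400 mm
# image would occupy inside a 400 mm image (same sensor assumed).
cut = size * 400 // 3400
cy, cx = size // 2, size // 2
local = gradient[cy:cy + cut, cx:cx + cut]

global_ptp = peak_to_peak(gradient)
local_ptp = peak_to_peak(local)
print(f"variation over the whole frame: {global_ptp:.4f}")
print(f"variation inside the cutout:    {local_ptp:.4f}")
```

Inside the cutout, the gradient varies by only a small fraction of its variation over the whole frame, so to a first approximation it acts as a constant offset there.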

Gradients affect the image at a global scale. To correct them, we have to deal with different local color and brightness biases. It can be challenging to achieve a homogeneous image, especially if we have low surface brightness and diffuse nebulae populating the image's background.

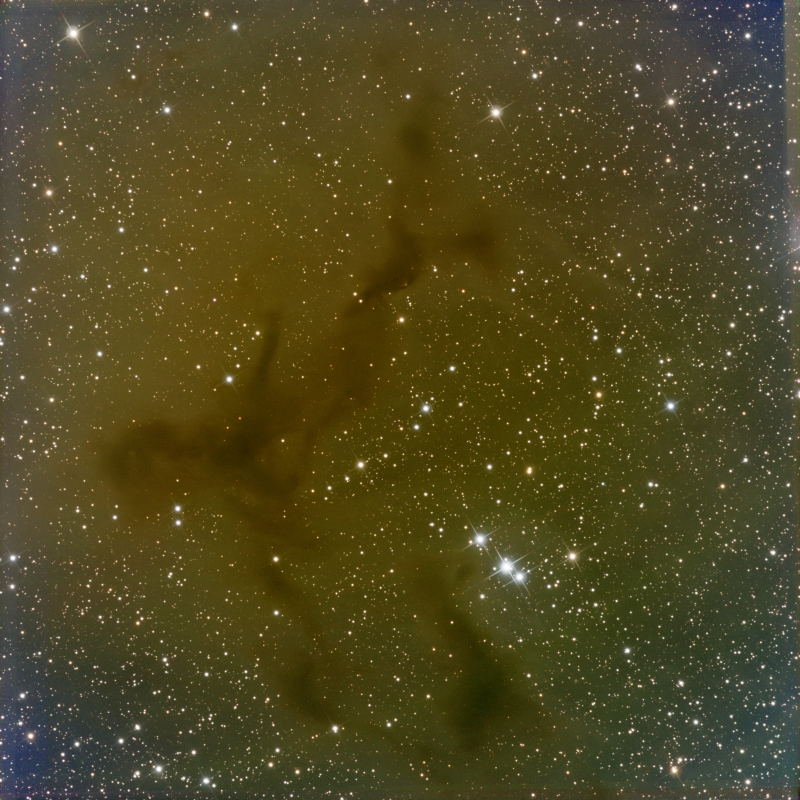

Instead, in a local environment, a gradient behaves as a constant shift in color and brightness. This is shown in the following example. We have a broadband image of PuWe 1 acquired with a 20-inch telescope having a 3400 mm focal length:

Image provided by OAUV / OAO.

The same object, photographed with a 400 mm focal length, occupies only a small part of the image:

Image provided by OAUV / OAO.

As described above, the wide field image has its own global-scale gradients. A software-based gradient correction applied with the Dynamic Background Extraction (DBE) process in PixInsight offers, as expected, a partial correction:

Image provided by OAUV / OAO.

But what is important is that the residual gradients are almost insignificant at a local level. This can be seen if we register the narrow-field image to the wide-field one:

Images provided by OAUV / OAO.

The multiscale gradient correction technique exploits this fact to achieve a superior gradient correction, given that there is no need to guess where the sky background is located in the image. A simple comparison between the wide-field and the narrow-field images performs the correction. Below is a comparison of the correction achieved with this methodology and with a traditional, software-based technique:

Image provided by OAUV / OAO.
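The comparison between a narrow-field image and a wide-field reference can be sketched in a few lines. This is a simplified illustration, not the implementation used in PixInsight. It assumes that both images are already registered, share the same sampling and flux scale, and that the reference is gradient-free at the scale of the target. The function names and the choice of a second-order polynomial surface as the gradient model are hypothetical.

```python
import numpy as np


def fit_smooth_surface(diff, order=2):
    """Least-squares fit of a low-order 2D polynomial to `diff`."""
    h, w = diff.shape
    y, x = np.mgrid[0:h, 0:w].astype(float)
    x = x / (w - 1)
    y = y / (h - 1)
    # All monomials x^i * y^j with i + j <= order.
    terms = [x**i * y**j for i in range(order + 1)
             for j in range(order + 1 - i)]
    design = np.stack([t.ravel() for t in terms], axis=1)
    coeffs, *_ = np.linalg.lstsq(design, diff.ravel(), rcond=None)
    return (design @ coeffs).reshape(h, w)


def correct_with_reference(target, reference, order=2):
    """Model the gradient as the smooth part of (target - reference)."""
    model = fit_smooth_surface(target - reference, order)
    return target - model, model


# Synthetic test: a diffuse "nebula", a gradient, and a little noise.
rng = np.random.default_rng(0)
n = 128
yy, xx = np.mgrid[0:n, 0:n] / (n - 1)
sky = 0.2 * np.exp(-((xx - 0.6) ** 2 + (yy - 0.4) ** 2) / 0.02)
gradient = 0.3 * xx + 0.1 * yy ** 2
reference = sky + rng.normal(0.0, 0.001, sky.shape)
target = sky + gradient + rng.normal(0.0, 0.001, sky.shape)

corrected, model = correct_with_reference(target, reference)
print("max error before:", float(np.abs(target - sky).max()))
print("max error after: ", float(np.abs(corrected - sky).max()))
```

Because the model is fitted to the difference between the two images, the nebula itself never has to be separated from the background: no background samples are placed by hand.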

3 Generalization of the Multiscale Gradient Correction Algorithm

The multiscale gradient correction technique can be generalized starting from very wide-field images. The following example is a gradient correction performed in two steps using 35, 400, and 3400 mm focal lengths. The 35 mm focal length image shows the entire Cygnus constellation:

Image provided by Sedat Bilgebay.

This image was acquired with a Canon EOS R and a Sigma 35 mm f/1.4 DG HSM Art lens stopped down to f/2.5, with 15 × 4-minute exposures.

For the intermediate focal length, we use a Canon EF 400 mm f/2.8L IS III USM lens and a Finger Lakes Microline ML16200 camera with RGB filters. The figure below shows the 400 mm image aligned to the 35 mm image:

Images provided by Sedat Bilgebay and OAUV / OAO.

By comparing these images, we are able to correct the gradients inside the 400 mm focal length image:

Image provided by OAUV / OAO.

This intermediate image is in turn used to correct the Barnard 150 image acquired with a PlaneWave 20-inch CDK at 3400 mm focal length. The CDK image was acquired with a Finger Lakes Proline 16803 and Johnson BVR filters.

Images provided by OAUV / OAO.

The 400 mm focal length image is able to correct the complex gradients inside the CDK image:

Image provided by OAUV / OAO.

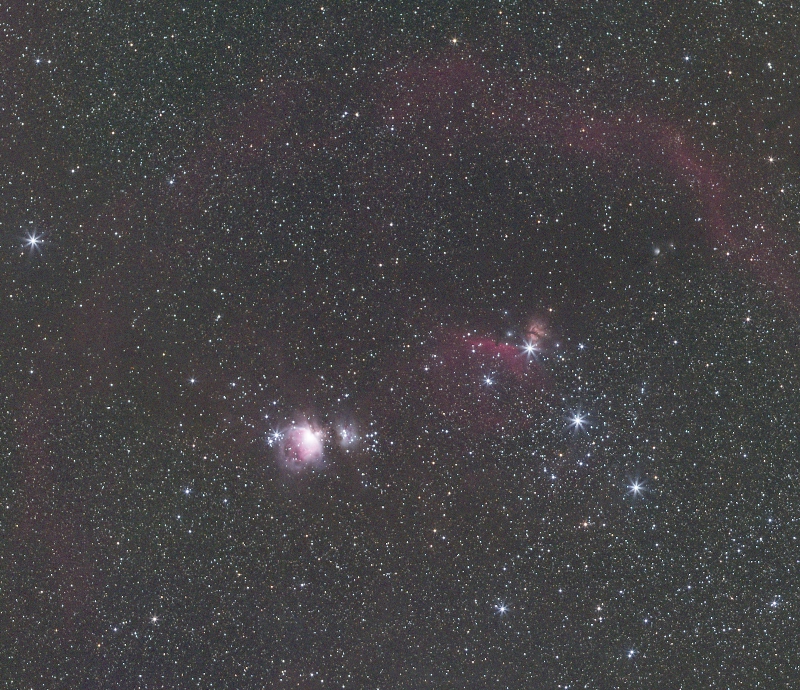

Very wide-field images can also correct gradients in intermediate focal length images. For instance, the following Orion image can effectively correct the gradients in a 300 mm focal length refractor:

Orion wide field image. Image provided by Sedat Bilgebay.

Image provided by Dustin Haertel.

This wide-field image of Taurus and Perseus constellations can be used to correct the gradients in an image of M45 acquired at 640 mm focal length:

Taurus and Perseus wide field image. Image provided by Sedat Bilgebay.

Image provided by Edoardo Luca Radice.

4 The Multiscale All-Sky Reference Survey (MARS)

Based on the generalized multiscale gradient correction concept, we will build an all-sky surface brightness reference capable of correcting additive gradients across the range of fields of view in which deep-sky imagers work. The survey data will also be used to build mosaics from a single, robust reference.

To achieve the needed all-sky coverage, we propose two different strategies for data collection:

- A user-contributed MARS survey (MARS-u).

- A PixInsight-Team-contributed MARS survey (MARS-pi).

4.1 The MARS-u Survey

PixInsight has an extensive user community. To speed up the data collection needed to release the next generation of gradient correction tools, we want to engage our user community by inviting them to contribute their data.

We are asking all PixInsight users to contribute wide-field images with a field of view between 3 and 50 degrees. All user-contributed data will pass through a quality control and processing pipeline designed by the PixInsight Development Team, which will allow the generation of a top-quality, all-sky surface brightness map at record speed. This way, each user's data will help correct other users' images having smaller fields of view.

We are building a dedicated website for the MARS project. Very shortly, you'll be able to upload your data there. Your contributed images must meet the following requirements:

- The images can be acquired with mono or color cameras (RGB bands). In the case of mono cameras, we also ask for narrowband H-alpha, [O-III], and [S-II] images.

- Broadband images should have been acquired under a sufficiently dark sky (Bortle scale 1 to 4).

- The images must be completely preprocessed master lights with no additional processing. We need your original masters without any gradient correction.

- The master lights must be generated with drizzle (even if you use drizzle x1). This is especially important for color cameras, since drizzle preserves the validity of the stellar photometry.

All the contributed images will go through our processing pipeline to determine whether they contain valid data for the survey and to properly fit them into the existing survey data.

The user-contributed data will be used exclusively to generate the surface brightness map that the gradient correction and mosaic assembly tools will access. On our website, we will also release an all-sky map showing the up-to-date completeness of the survey, so each user can contribute new data from the sky regions where it is most needed.

4.2 The MARS-pi Survey

MARS is an endless project. The more data we collect, the better gradient correction we'll achieve. The PixInsight Development Team is investing significant resources by installing a remote observing station located in AstroCamp (southern Spain) under very dark skies to collect high-quality surface brightness data that will enhance the performance of the tools in the mid and long term.

The observing station comprises the following equipment:

- 50-degree field of view camera: 35 mm focal length lens with a full-frame mono CMOS camera. Full hemisphere coverage in 56 pointings. Initial exposure time per pointing of 1 hour.

- 15-degree field of view camera: 135 mm focal length lens with a full-frame mono CMOS camera. Full hemisphere coverage in 198 pointings. Initial exposure time per pointing of 2 hours.

- Both cameras are equipped with broadband RGB filters and narrowband SHO filters.

- A future telescope with a field of view of 5 degrees is planned, to reach further correction and depth in the mid and long term.

- An on-site processing computer to automate the preprocessing pipeline of the survey.
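The nominal fields of view quoted above follow from the lens focal length and the sensor size. The sketch below uses the standard rectilinear relation FOV = 2·atan(d / 2f). The 36 × 24 mm sensor dimensions are an assumption for a nominal full-frame sensor, since the exact camera model is not stated here.

```python
import math


def field_of_view_deg(sensor_mm, focal_length_mm):
    """Angular field of view along one sensor dimension, in degrees."""
    return math.degrees(2 * math.atan(sensor_mm / (2 * focal_length_mm)))


# Assumed nominal full-frame sensor dimensions (width, height) in mm.
FULL_FRAME_MM = (36.0, 24.0)

for focal_length in (35, 135):
    width = field_of_view_deg(FULL_FRAME_MM[0], focal_length)
    height = field_of_view_deg(FULL_FRAME_MM[1], focal_length)
    print(f"{focal_length} mm: {width:.1f} x {height:.1f} degrees")
```

Under these assumptions, the long side of the sensor covers a little over 54 degrees at 35 mm and a little over 15 degrees at 135 mm, in line with the nominal 50-degree and 15-degree figures.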

MARS-pi aims to generate a congruent map up to scales of 25 degrees. The MARS-pi data will be an all-sky, top-quality surface brightness map that will complement the MARS-u data set. In the mid-term, MARS-pi data will serve as the reference for the MARS-u data to have an all-sky map with an optimal depth.

The MARS-pi survey will be a bracketed data set with fields of view from 5 to 50 degrees using multiple telescope and lens assemblies. It will cover the entire sky with broadband RGB and narrowband SHO filters and will start collecting data in the coming months.

4.3 MARS Products and Related Tools

The main product of the MARS project will be a database that will complement the Gaia catalog to normalize the images at local or global scales accurately. With Gaia performing the multiplicative normalization and MARS performing the additive normalization, images will be corrected and fitted using observational data only.

The MARS product will be an all-sky grid of samples with the additive light contribution. This means that we are not building a database defining where the sky background is, but a database defining the amount of light from extended objects in any given direction. Therefore, the next generation of gradient correction tools in PixInsight won't need any sample placement or any subjective decision to create a gradient model. The new tools will remove gradients in the entire image by modeling them not only on sky background areas but also over the extended objects, working in a completely automated way. This applies to both additive and multiplicative gradients, with the help of the Gaia catalog.

As mentioned above, the same procedure will be applied to mosaic construction. Both Gaia and MARS will enable us to build mosaics in a single run by having a common and robust reference over the entire field of view. This will prevent the extrapolation errors that arise when the reference is always just a portion of the complete mosaic.
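The division of labor between a stellar reference and a surface brightness reference can be sketched for a single small image region, where the observed signal is modeled as `scale * sky + offset`. This is only a conceptual illustration under that assumed linear model. The synthetic catalog fluxes, the reference samples, and the use of medians as estimators are hypothetical and do not describe the actual tools or the Gaia and MARS data formats.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical ground truth for one small image region.
true_scale, true_offset = 1.8, 0.05

# Multiplicative term: star fluxes are measured above the local
# background, so the ratio of measured to catalog flux depends only
# on the scale factor.
catalog_flux = rng.uniform(10.0, 100.0, size=50)
measured_flux = true_scale * catalog_flux * rng.normal(1.0, 0.01, size=50)
scale = float(np.median(measured_flux / catalog_flux))

# Additive term: extended-light samples are compared with a surface
# brightness reference once the scale factor is known.
reference_sb = rng.uniform(0.0, 0.2, size=50)
measured_sb = (true_scale * reference_sb + true_offset
               + rng.normal(0.0, 0.001, size=50))
offset = float(np.median(measured_sb - scale * reference_sb))

# Normalized samples: remove the additive term, then the scale.
normalized_sb = (measured_sb - offset) / scale
print(f"estimated scale:  {scale:.3f}")
print(f"estimated offset: {offset:.4f}")
```

Neither estimate requires deciding which pixels belong to the sky background: both come from comparing the image with reference data.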

In the mid and long term, further MARS data releases will improve the survey's depth, enabling the correction of deeper images and gradients over images acquired with longer focal lengths. We expect to be able to correct images up to 1500 mm focal length in the first data release.

5 Conclusion

Current gradient correction and mosaic building techniques need a complete revision. Our commitment to being at the forefront of the technique requires us to rethink how we approach this problem. The MARS project is born from our desire to no longer rely on subjective interpretations, whether human or artificial, to produce a pristine image that meets our and our users' quality standards.

Copyright © 2023 Pleiades Astrophoto, WeDoArt Productions