SkyAnd

Hi everyone, I hope someone can help me with my SNR and Statistics process issues.

I use a QHY 268-C OSC with an Astronomik L2 UV/IR cut filter on an APM 140/980 (f/7) refractor. My backyard sky is typically Bortle 4, fairly good conditions for an astrophotographer. PixInsight is my main stacking and post-processing software, and I have been using it for nearly 6 months.

I tried to find out a little more about the SNR of my pictures by using the Statistics process and simply dividing the mean by the StdDev. I was very surprised to notice that the StdDev value does not change through the calibration and stacking process, so the calculated SNR stays poor.

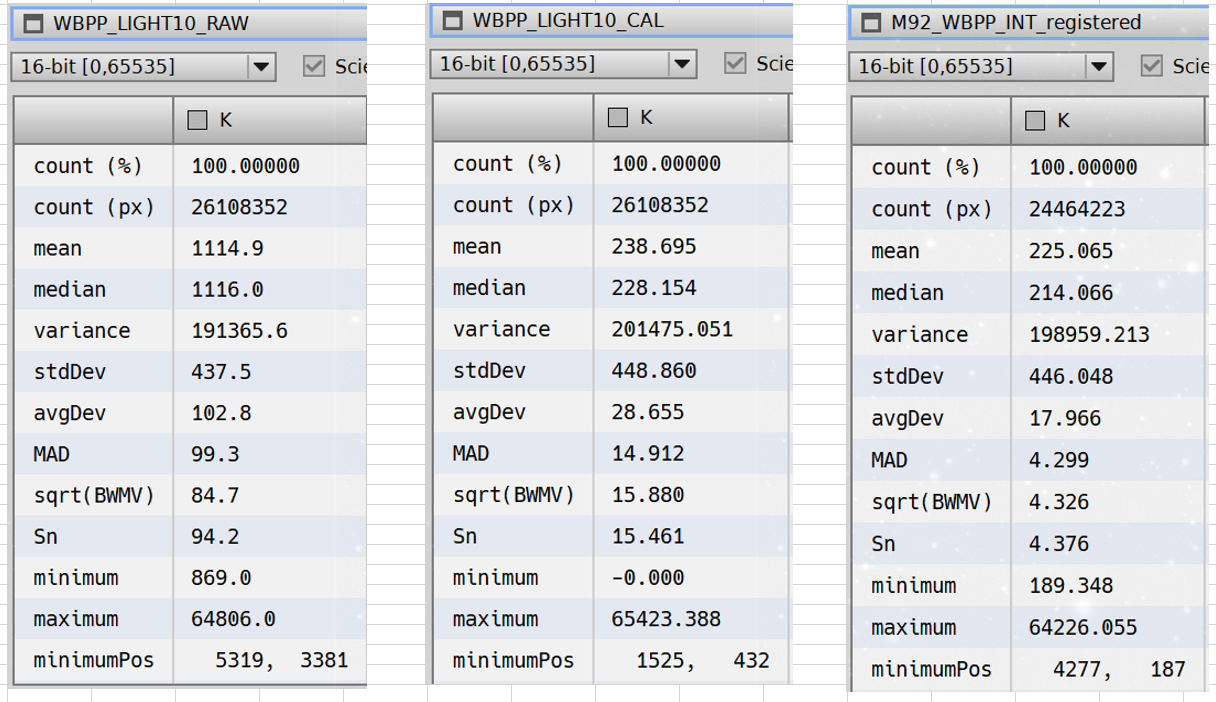

See the following example, a 300 s exposure of M92 (DSO mode, gain 26, offset 50, sensor temperature -10°C): the RAW single frame is on the left, the calibrated (CAL) single frame is in the middle (MasterDark with a mean of approx. 850 ADU), and the integrated (INT) stack is on the right (only 13 x 300 s = 65 min). All three were converted from RGB to grey.

The StdDev never changed, but the AvgDev and the MAD decrease through the process. Is AvgDev the figure I should use instead of StdDev to get a better estimate, i.e. SNR = mean/AvgDev? And what is the difference between the two?
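To make the question concrete, here is a minimal Python sketch of the three dispersion measures on a made-up "image". It assumes the usual definitions (StdDev around the mean, AvgDev as the mean absolute deviation from the median, MAD as the median absolute deviation from the median), which may not match PixInsight's implementation in every detail. All pixel values, the star count, and the helper names are hypothetical; only the 13-frame count comes from my stack.

```python
import math
import random
import statistics

def std_dev(values):
    # Standard deviation around the mean; squares the deviations,
    # so a few very bright pixels dominate the result.
    return statistics.pstdev(values)

def avg_dev(values):
    # Mean absolute deviation from the median (assumed definition).
    med = statistics.median(values)
    return sum(abs(v - med) for v in values) / len(values)

def mad(values):
    # Median absolute deviation from the median; ignores outliers.
    med = statistics.median(values)
    return statistics.median([abs(v - med) for v in values])

def fake_frame(noise_sigma, rng, n_background=10000, n_star=50):
    # Hypothetical frame: noisy background plus a few bright star pixels.
    background = [rng.gauss(1000.0, noise_sigma) for _ in range(n_background)]
    stars = [30000.0] * n_star
    return background + stars

rng = random.Random(1)
n_frames = 13
single = fake_frame(20.0, rng)                        # one sub-exposure
stack = fake_frame(20.0 / math.sqrt(n_frames), rng)   # ideal average of 13 subs

for name, frame in (("single", single), ("stack", stack)):
    print(name,
          "StdDev=%.1f" % std_dev(frame),
          "AvgDev=%.1f" % avg_dev(frame),
          "MAD=%.1f" % mad(frame))
```

In this toy case the StdDev is set almost entirely by the star pixels, so it barely moves when the background noise drops, while the MAD follows the background noise closely and the AvgDev sits in between. Whether this is what actually happens in my frames is exactly what I am trying to confirm.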

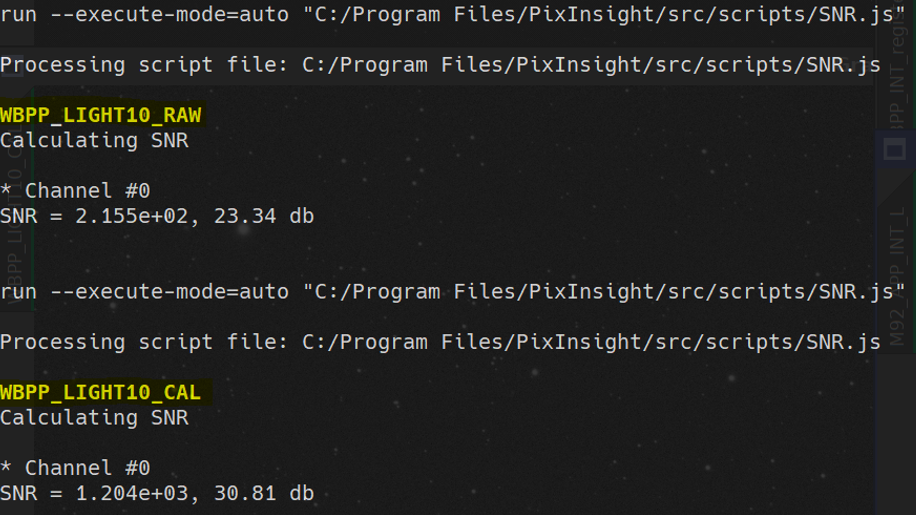

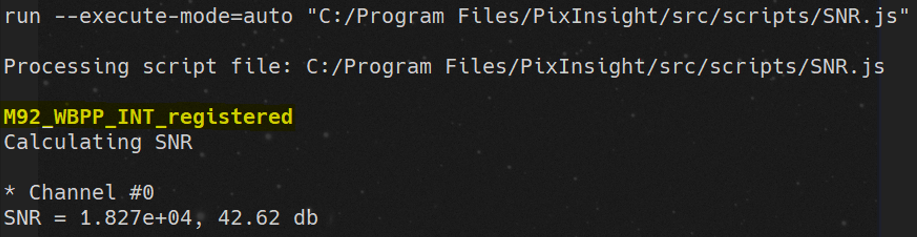

Using the SNR script by Hartmut Bornemann, the results are not so bad and improve steadily through the process. Is this the better way to get a realistic comparison? Here are the figures:

Thanks a lot for your help

CS Andreas
